Science and Mechatronics Aided Research for Teachers with an Entrepreneurship Experience (SMARTER)

2014: Week V

Prasad Akavoor and Yancey Quiñones

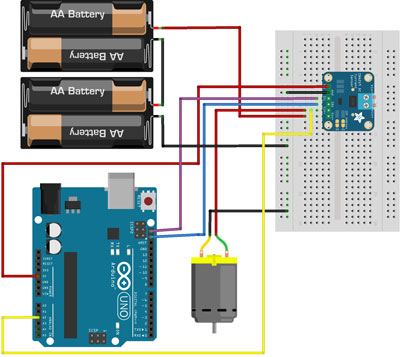

We decided to continue running the motor without the motor shield, because the shield is not central to our goals. We realized that the motor must be connected to the low end of the current sensor or the sensor will not read voltage! We also discovered that the drill press we had hoped to use to do positive and negative work on the motor would not work, because it rotates more slowly than the motors we have been experimenting with. We are therefore better off applying a mechanical load as we had been doing, by pressing an object (a cork, in our case) against the spinning axle of the motor; this way we can also stall the motor when we want to. Driving the motor in reverse essentially puts it on the low side of the current sensor, which makes the voltage readings meaningless (nearly zero), so we ran the motor in one direction only.

It is important to mention a key difference between the two current sensors we have been using: the INA219 (shunt-resistor based) and the ACS712 (Hall-effect based). The former reports both the current and the load voltage directly, while the latter reports only the current. To measure the load voltage with the ACS712, we must therefore connect the “high end” of the motor to one of the Arduino’s analog pins. We did the same with the INA219, just to confirm that the sensor’s load-voltage values and the direct analog-pin measurements were comparable. And they were!

By the end of the week, we finally had voltage and current measurements working with both types of sensors. The currents measured with the sensors agreed well with those measured by a DMM: the DMM read about 230 mA (0.230 A), while the INA219 averaged slightly more than 0.230 A and the ACS712 averaged slightly less. In all, we took data for the following six cases with each of the two sensors.

- Motor with no mechanical load

- Motor with a brief mechanical load, but not stalled

- Motor stalled and not recovered

- Motor stalled for 10 s or so and then recovered by unplugging and resetting

- Motor stalled for 20 s or so and then recovered by unplugging and resetting

- Motor stalled for 10 s or so and then recovered on its own

In each case, we plotted power vs. time. For the ACS712, we used an op amp to amplify the output voltage and scaled it appropriately to calculate the current in the circuit.
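The path from raw readings to the power vs. time plots can be sketched as below. This is not our Arduino code; it is a minimal Python sketch that assumes a 10-bit ADC with a 5 V reference (as on an Arduino Uno), the 5 A ACS712 variant (185 mV/A), and two calibration values, the op amp gain (`amp_gain`) and the pin voltage at zero current (`zero_current_v`), whose defaults here are placeholders rather than our measured values. The optional `divider_ratio` is a hypothetical parameter for the case where the motor voltage exceeds the ADC reference and must be divided down. Power is computed sample by sample as P = V × I, and `percent_difference` compares a sensor’s average current with the DMM reading.

```python
"""Sketch: convert raw Arduino ADC counts into current, load voltage, and power.

All constants and defaults are illustrative assumptions, not our measured values.
"""
from dataclasses import dataclass

ADC_MAX = 1023   # assumed 10-bit ADC (e.g. Arduino Uno)
V_REF = 5.0      # assumed ADC reference voltage, in volts


def counts_to_volts(counts: int) -> float:
    """Convert a raw analogRead() count to volts at the pin."""
    return counts * V_REF / ADC_MAX


@dataclass
class Acs712Calibration:
    sensitivity_v_per_a: float = 0.185  # 5 A ACS712 variant (assumed)
    amp_gain: float = 1.0               # hypothetical op amp gain
    zero_current_v: float = 2.5         # placeholder: pin voltage measured with the motor off

    def current_amps(self, counts: int) -> float:
        """Current from the amplified ACS712 output, relative to the zero-current level."""
        v = counts_to_volts(counts)
        return (v - self.zero_current_v) / (self.amp_gain * self.sensitivity_v_per_a)


def load_voltage(counts: int, divider_ratio: float = 1.0) -> float:
    """Motor 'high end' voltage; divider_ratio > 1 only if a voltage divider is used (hypothetical)."""
    return counts_to_volts(counts) * divider_ratio


def power_series(voltage_counts, current_counts, cal, divider_ratio=1.0):
    """Instantaneous power P = V * I for each paired (voltage, current) sample."""
    return [
        load_voltage(vc, divider_ratio) * cal.current_amps(ic)
        for vc, ic in zip(voltage_counts, current_counts)
    ]


def percent_difference(measured: float, reference: float) -> float:
    """Signed percent difference of a sensor average from a DMM reference value."""
    return 100.0 * (measured - reference) / reference
```

With paired lists of raw counts from the two analog pins, `power_series(v_counts, i_counts, cal)` returns one power value per sample, ready to plot against the sample timestamps.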

Charisse A. Nelson and Sarah Wigodsky

This week we completed tension testing of our 1%, 5%, and 10% hemp composite samples and created stress vs. strain graphs for each. From the graphs we determined the average modulus and the average ultimate stress. Although the sample size was small, the data are consistent with existing composite research. The 10% composite had the highest average modulus and was therefore the stiffest, owing to its additional hemp fibers, while the 1% composite had the highest ultimate stress. After completing our analysis, we moved on to preparing our accidental hemp foam composites, since they offered new information. To get the samples ready for testing, we drilled out 10 mm samples with the drill press and then used the precision saw and polisher to trim them down to 5 mm. We then examined three samples from the 5% and 10% composites under the optical microscope to see the size of the air cells and how the hemp fibers were arranged relative to those cells. The 1% composite was not used because it never foamed. Next week we will conclude our experimentation by testing the remaining samples in static and dynamic compression.
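Extracting an average modulus and ultimate stress from the stress vs. strain curves can be sketched roughly as follows. This is an illustration, not the procedure we used: it assumes the linear (elastic) region ends at a hypothetical cutoff strain of 0.5% (`max_linear_strain`), takes the modulus as the least-squares slope of that region, and takes the ultimate stress as the curve’s maximum. The modulus comes out in the same stress units as the input (e.g. MPa).

```python
"""Sketch: elastic modulus and ultimate stress from (strain, stress) tension-test data."""
from statistics import mean


def linear_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    x_bar, y_bar = mean(xs), mean(ys)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


def modulus(strain, stress, max_linear_strain=0.005):
    """Slope of the initial region where strain <= max_linear_strain (cutoff is an assumption)."""
    pts = [(e, s) for e, s in zip(strain, stress) if e <= max_linear_strain]
    if len(pts) < 2:
        raise ValueError("need at least two points in the linear region")
    es, ss = zip(*pts)
    return linear_slope(es, ss)


def ultimate_stress(stress):
    """Peak stress reached during the test."""
    return max(stress)


def summarize(samples, max_linear_strain=0.005):
    """Average modulus and average ultimate stress over a list of (strain, stress) curves."""
    moduli = [modulus(e, s, max_linear_strain) for e, s in samples]
    peaks = [ultimate_stress(s) for _, s in samples]
    return mean(moduli), mean(peaks)
```

Calling `summarize` once per composite (1%, 5%, 10%) would yield one average modulus and one average ultimate stress per composite; in practice the linear-region cutoff is usually chosen by inspecting each curve rather than fixed in advance.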

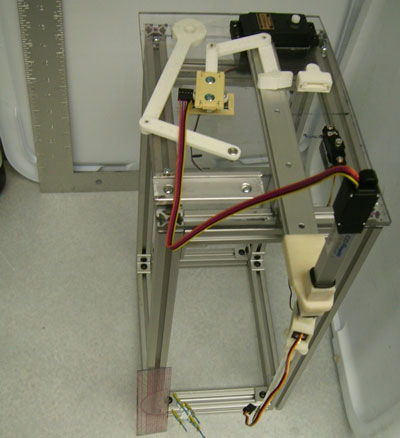

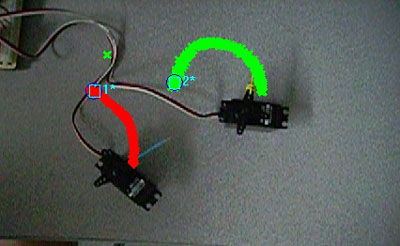

David Arnstein and Horace Walcott

This week we assembled a new bio-mimetic platform to study fish behavior, including the abaxial appendage attached to the major horizontal arm of the device. Additional plastic components required for the arm to function were 3D printed. Using the ProAnalyst software, we conducted tracking studies of the simultaneous operation of the two motors on the platform’s major arm and of all four motors of the platform. We also tracked the four motors while they ran in-house code that simulates the linear darting and pelvic tail flutter (twitching) motion of the zebrafish. A mix-up in the mailroom cost us a week waiting for parts from a vendor when, in fact, the parts were sitting downstairs; this lost time compressed our build schedule. We discovered interesting design problems in the existing SolidWorks platform schematics that we will not have time to correct, but we will report proposed solutions in our final paper. This experience (going from design drawings and computer modeling to actual construction) illustrated important aspects of the design/modeling/building engineering cycle. A similar process occurred in software development for the Arduino: our program began behaving unpredictably, and we discovered that the program code was overlapping with variable/memory space on the Arduino. This forced us to remove comments and explanatory user text and to tighten up the code. It also means that adding further modules to mimic natural zebrafish behavior will be impossible without a complete rewrite of the current code.

Ulugbek Akhmedo

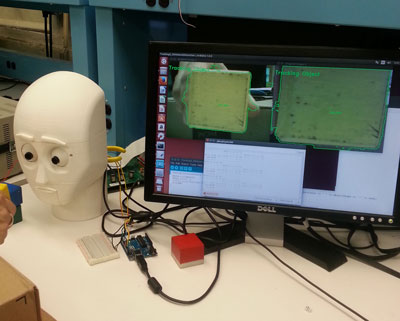

Supporting software development to coordinate CAESAR’s gestures with voice commands.

Lisa Ali and Michael Zitolo

This week was very intense for us in the Mechatronics and Controls lab. Although we succeeded in getting the Arduino to communicate with the ArbotiX and the computer over software serial, we noticed that the calculated object locations were incorrect. We then brainstormed ways to troubleshoot the problem. First, we checked the coordinates the computer was sending to the Arduino; next, we checked the algorithm used to calculate distance; and finally, we examined the information the ArbotiX was sending to the Arduino. After extensive testing, we believe that one or both of the cameras serving as CAESAR’s eyes are not perfectly aligned on the plastic platform, which might account for the discrepancy in our location readings. Preliminary testing indicates a misalignment of about 4 degrees. We will use our final week to find a way to obtain more accurate readings.
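Our distance algorithm is not described here, so the following is only a generic illustration, not our code. It assumes two cameras on a common baseline, each reporting the interior angle between the baseline and its line of sight to the object, and a fixed mounting error that adds to one camera’s measured angle. It shows how a few degrees of misalignment shifts a triangulated position, and how a known offset (the hypothetical `mounting_offset_deg`) could be subtracted before triangulating.

```python
"""Sketch: two-camera triangulation and the effect of a fixed camera mounting offset."""
import math


def triangulate(baseline, alpha_deg, beta_deg):
    """Locate a point seen by two cameras on a common baseline.

    Left camera at (0, 0), right camera at (baseline, 0).
    alpha_deg / beta_deg: interior angles between the baseline and each camera's ray.
    Returns (x, y) in the same units as baseline.
    """
    if not (0 < alpha_deg < 180 and 0 < beta_deg < 180 and alpha_deg + beta_deg < 180):
        raise ValueError("rays do not intersect in front of the baseline")
    ta = math.tan(math.radians(alpha_deg))
    tb = math.tan(math.radians(beta_deg))
    # Intersection of y = x * ta (left ray) and y = (baseline - x) * tb (right ray).
    x = baseline * tb / (ta + tb)
    return x, x * ta


def corrected_angle(measured_deg, mounting_offset_deg):
    """Remove a known, fixed mounting error (assumed additive) from a measured angle."""
    return measured_deg - mounting_offset_deg
```

For example, with a hypothetical 20 cm baseline and an object seen at 45° by both cameras, a 4° error on the right camera moves the computed point from (10, 10) cm to roughly (10.7, 10.7) cm; applying `corrected_angle` with the known offset recovers the original point.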

Lee Hollman

Last week, the design team and I solved the problem of how to rework CAESAR’s mouth so that it smiles and frowns more effectively to convey emotions. I referenced Robodyssey’s ESRA II robot, which features four servo motors that flex an elastic band, and asked whether we might be able to design something similar with the two servo motors in CAESAR. Based on this suggestion, Miles created a sketch with two attachments to CAESAR’s mouth that enable the servos to stretch and bend a small band across the mouth. After printing the new mouth on the MakerBot, we tested various expressions. This week, I also interviewed Julie Russell, the Educational Director at the Brooklyn Autism Center, to get her feedback on our project and to see whether she would be interested in testing our robot at a future date. She agreed that repeating facial expressions is useful and consistent with existing autism therapies, but added that it would be even more useful for the robot to teach coping strategies for handling difficult emotions. I incorporated her insights into a draft of the paper for the Human-Robot Interaction conference, and Ms. Russell expressed interest in testing CAESAR when we are ready to do so.

Angeleke Lymberatos and Louis Morgan

The third week of working in the soil mechanics lab was certainly challenging, but we made a lot of progress. Several attempts to grow germinating alfalfa seeds in a transparent medium have given us valuable feedback, and we have drawn the following inferences from our observations so far:

- A small, narrow container may be better for sustaining plant growth in this medium. We have used containers of different sizes (from 25 × 18 × 18 cm down to 7 × 5.5 × 9.5 cm), and the plants appear to thrive better in the smaller ones. This may be related to the compactness of the soil: the aquabeads can be compacted better in small, narrow containers. In some cases we added glass marbles to compact the medium even more.

- The depth of the aquifer is critical to plant survival. When the aquifer is too low, the plant does not survive; apparently the roots are unable to obtain sufficient water when in direct contact with the transparent medium.

- The size of the swollen aquabeads was also important, as plants thrive better with smaller beads. This is a significant observation because intact, water-infused aquabeads can be up to 1.5 cm in diameter. Most of the aquabeads were used intact in the transparent medium, which makes these particles similar to gravel in size (coarse-grained) but somewhat viscous. It would be interesting to see how soil engineers would classify these transparent media based on grain size or Atterberg limits. We may need to crush the aquabeads into particle sizes that better simulate soil particles.

- We will try to design aesthetically pleasing transparent containers to house the alfalfa plants! We are also thinking about infusing some of the aquabeads with colored mineral water for a stunning visual effect: a living art form!
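As a rough illustration of where the aquabeads might fall by grain size alone, the function below applies the particle-size boundaries of the Unified Soil Classification System (USCS, ASTM D2487): gravel passes 75 mm and is retained on the No. 4 sieve (4.75 mm), sand passes 4.75 mm and is retained on the No. 200 sieve (0.075 mm), and anything finer counts as fines. Treating a swollen bead’s diameter as its grain size is an assumption. A full USCS classification also needs the gradation curve and, for fine-grained soils, the Atterberg limits, which this sketch ignores.

```python
"""Sketch: particle-size class using USCS (ASTM D2487) sieve boundaries only."""


def uscs_size_class(diameter_mm: float) -> str:
    """Return the USCS size class for a single particle diameter in millimetres."""
    if diameter_mm <= 0:
        raise ValueError("diameter must be positive")
    if diameter_mm < 0.075:     # passes No. 200 sieve
        return "fines (silt/clay)"
    if diameter_mm < 4.75:      # passes No. 4 sieve
        return "sand"
    if diameter_mm < 75:        # passes 75 mm (3 in)
        return "gravel"
    if diameter_mm < 300:       # passes 300 mm (12 in)
        return "cobbles"
    return "boulders"
```

By this measure, an intact 1.5 cm (15 mm) bead classifies as gravel, while beads crushed below 4.75 mm would fall in the sand range.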